We ran a blind test of 50 video scripts, half written by ChatGPT and half by professional copywriters. The results surprised us, and they hold useful lessons about AI content creation.

The Test Setup

We created 50 short-form video scripts on business topics: 25 written by experienced copywriters and 25 by ChatGPT with optimized prompts. We then had 200 marketers rate each script on engagement potential, clarity, and persuasiveness without knowing which were AI-generated.

The Results

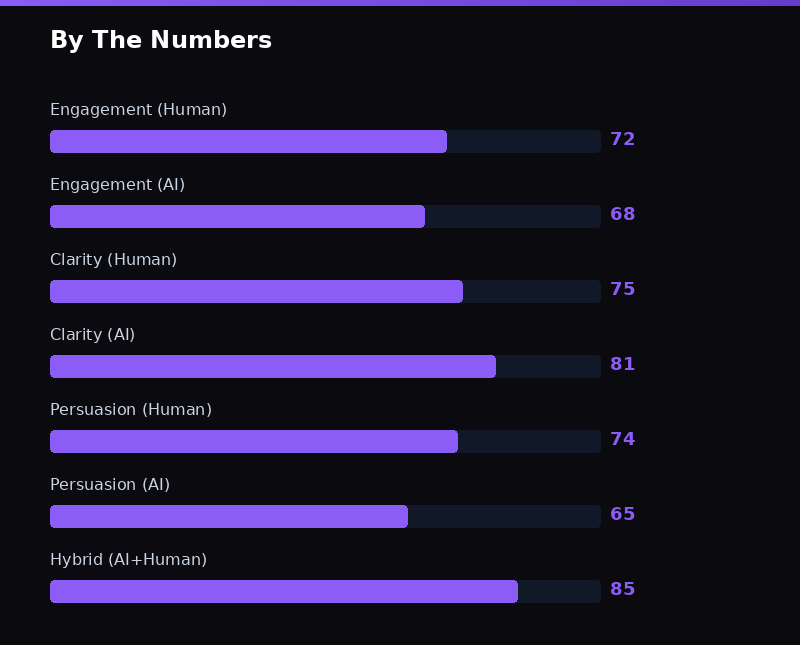

Engagement potential: Human scripts scored 7.2/10, AI scripts scored 6.8/10. Close, but humans had an edge in creativity and unexpected angles.

Clarity: AI scripts scored 8.1/10, human scripts scored 7.5/10. AI excels at clear, structured communication.

Persuasiveness: Human scripts scored 7.4/10, AI scripts scored 6.5/10. Humans better understood emotional triggers and audience psychology.
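
To make the comparison easier to check, here is a minimal Python sketch that computes the human-minus-AI gap in each category and an overall average per group. The scores come straight from the results above. The function names and the equal weighting of the three categories are assumptions made for illustration, not part of the original test.

```python
# Mean ratings (out of 10) as reported in the results above.
scores = {
    "engagement": {"human": 7.2, "ai": 6.8},
    "clarity": {"human": 7.5, "ai": 8.1},
    "persuasiveness": {"human": 7.4, "ai": 6.5},
}


def category_gaps(scores):
    """Return the human-minus-AI gap per category, rounded to one decimal."""
    return {name: round(s["human"] - s["ai"], 1) for name, s in scores.items()}


def overall_mean(scores, writer):
    """Unweighted mean across categories (equal weighting is an assumption)."""
    values = [s[writer] for s in scores.values()]
    return round(sum(values) / len(values), 2)


gaps = category_gaps(scores)
human_overall = overall_mean(scores, "human")
ai_overall = overall_mean(scores, "ai")

for name, gap in gaps.items():
    leader = "human" if gap > 0 else "AI"
    print(f"{name}: {leader} scripts lead by {abs(gap)} points")
print(f"overall: human {human_overall}, AI {ai_overall}")
```

Under that equal-weighting assumption, the human scripts average 7.37 and the AI scripts 7.13. AI leads only on clarity (by 0.6 points), while the largest gap in either direction is the 0.9-point human lead on persuasiveness.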

The Hybrid Approach Wins

The real winner? Neither pure AI nor pure human, but the combination of the two. AI-generated scripts edited by humans scored 8.5/10 across all categories. This is why platforms like zSellify use AI for generation but apply human-designed templates and hook formulas to guide the output.

The future of content creation isn't AI replacing humans. It's AI handling volume while human creativity guides strategy. Try our AI Content Detector to see how detectable AI-written content really is.